AI RFP Response Automation: Building Vercor

Sam Okpara

December 2025

An RFP lands in the inbox and immediately turns into expensive work.

Someone has to read the document, pull out the real requirements, match staff to roles, hunt down past performance writeups, verify compliance language, and then assemble everything into the exact format the buyer expects. Miss a requirement in an appendix or misread a staffing minimum and you can lose before the proposal is meaningfully evaluated.

This is still a major operational drain. In Loopio's 2025 RFP Trends & Benchmarks report, produced with APMP, teams said RFPs influenced 37% of company revenue on average. Later in 2025, Loopio reported that proposal teams were still spending about 25 hours writing a single response, even as AI adoption accelerated.

That mismatch is the opportunity.

If a proposal team is still burning most of a week on assembly work for every bid, the problem is not effort. The problem is the process.

Why RFP response work stays so manual

Proposal teams do not lose time because they cannot write.

They lose time because the work is fragmented:

- requirements are buried across long documents and appendices

- supporting content lives across drives, old proposals, and inboxes

- staffing decisions depend on resume details that are rarely centralized well

- compliance checks happen too late, after the document is already assembled

That is why so many bids feel like document assembly with occasional strategy, instead of strategy supported by automation.

What we built

Vercor is an AI-powered RFP response system designed to handle the assembly-heavy part of proposal work.

The goal was not to generate a magical final submission with no human review. The goal was to compress the expensive, repetitive parts of the workflow so the people who understand the bid can spend their time on positioning, risk review, and final judgment.

In practice, that meant building a pipeline that could:

- parse source files

- extract requirements in a structured way

- map them to a response outline

- draft against a real knowledge base

- recommend staffing matches

- assemble compliance and support sections

- format the result for delivery

- flag gaps before a human reviewer does the final pass

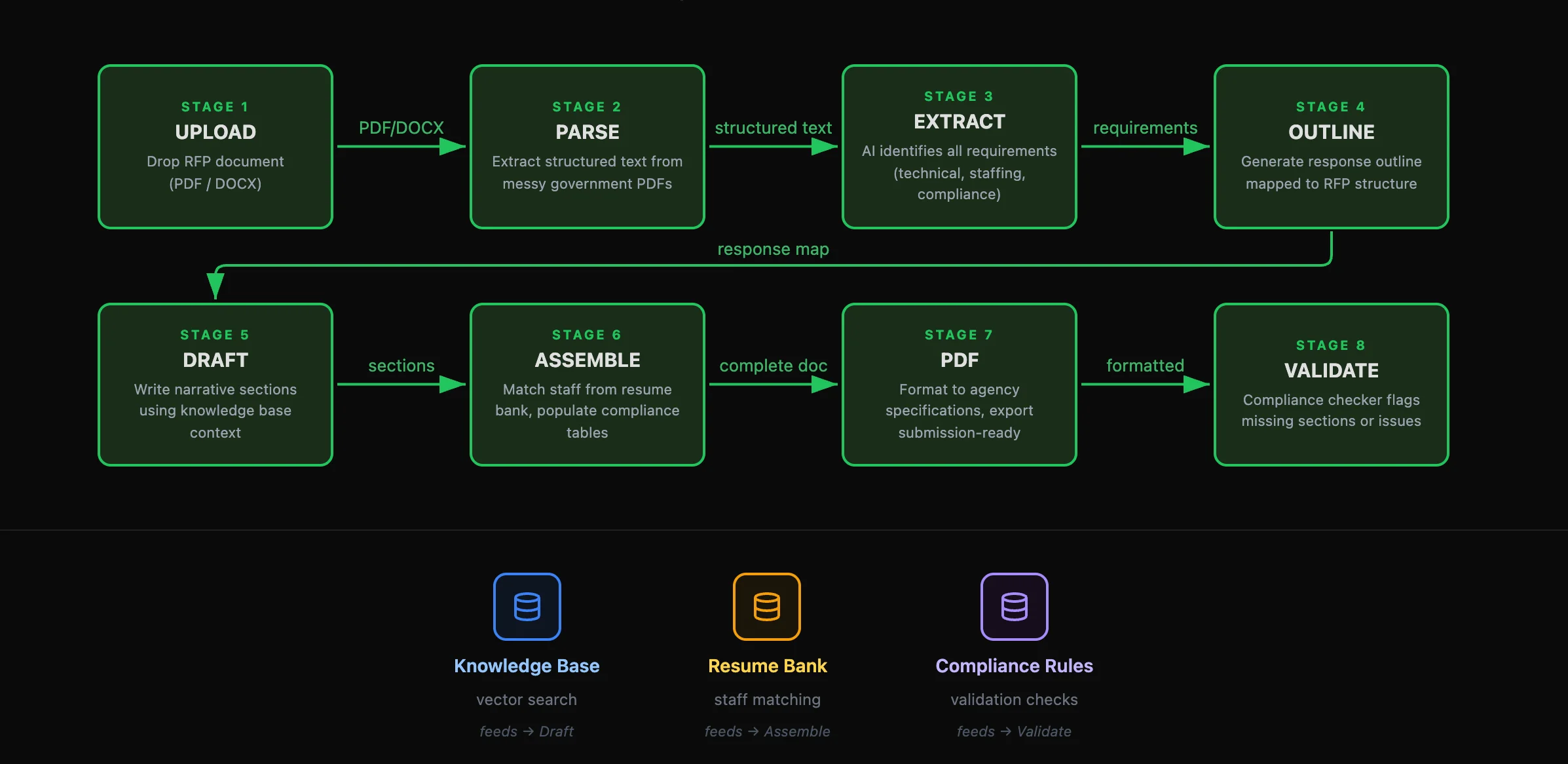

The 8-stage pipeline

We broke the workflow into eight stages because proposal work fails when too much logic gets collapsed into one vague "generate the response" step.
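To make the staging concrete, here is a minimal Python sketch of an explicit stage runner. The stage names come from the list in this post; the `Bid` state object, the `handlers` mapping, and `run_pipeline` are hypothetical names chosen for illustration, not Vercor's actual code.

```python
from dataclasses import dataclass, field
from typing import Callable

# Stage names follow the article; everything else here is hypothetical.
STAGES = ["upload", "parse", "extract", "outline",
          "draft", "assemble", "format", "validate"]


@dataclass
class Bid:
    """Working state handed from one stage to the next."""
    data: dict = field(default_factory=dict)
    completed: list[str] = field(default_factory=list)


def run_pipeline(bid: Bid, handlers: dict[str, Callable[[Bid], None]]) -> Bid:
    """Run every stage in order and stop at the first one that fails."""
    for name in STAGES:
        try:
            handlers[name](bid)
        except Exception as exc:
            # Naming the failing stage is the point of keeping stages separate.
            raise RuntimeError(f"stage '{name}' failed: {exc}") from exc
        bid.completed.append(name)
    return bid
```

Because each stage is a named unit, a failure reports "parse failed" or "validate failed" rather than a generic generation error, which is what makes a bad run debuggable.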

1. Upload

The system starts with the actual RFP package: PDFs, Word docs, appendices, and whatever formatting quirks the issuer decided to use.

2. Parse

Government and enterprise procurement documents are messy. Tables break. Headers repeat. OCR noise creeps in. Parsing is its own step because clean text extraction is a prerequisite for everything else.
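One small piece of that cleanup, repeated headers, can be sketched in a few lines. This assumes text has already been extracted page by page; the function name and the `min_share` threshold are illustrative assumptions, and real parsing also has to deal with tables and OCR noise that this does not touch.

```python
import math
from collections import Counter


def strip_repeated_lines(pages: list[str], min_share: float = 0.6) -> list[str]:
    """Drop lines that appear on at least `min_share` of the pages.

    Lines that recur on most pages are usually running headers or footers.
    """
    page_lines = [[ln.strip() for ln in page.splitlines()] for page in pages]
    counts = Counter()
    for lines in page_lines:
        counts.update({ln for ln in lines if ln})  # count each line once per page
    # Never treat a line as repeated unless it shows up on at least two pages.
    threshold = max(2, math.ceil(min_share * len(pages)))
    repeated = {ln for ln, n in counts.items() if n >= threshold}
    return ["\n".join(ln for ln in lines if ln not in repeated)
            for lines in page_lines]
```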

3. Extract

This is where the system identifies discrete requirements: technical asks, staffing minimums, compliance rules, evaluation criteria, attachments, and formatting constraints. If the response team has to discover those manually every time, speed disappears.
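As a rough illustration of what "discrete requirements" means as data, the sketch below turns obligation sentences into structured records. It is a keyword heuristic standing in for whatever extraction method a production system uses; the `Requirement` fields, the cue words, and the category names are all assumptions made for this example.

```python
import re
from dataclasses import dataclass

# Hypothetical cue words. Sentences matching no category default to "technical".
CATEGORY_CUES = {
    "staffing": ("personnel", "staff", "years of experience", "certified"),
    "formatting": ("page", "font", "margin"),
    "compliance": ("comply", "certification", "insurance"),
}
MANDATORY = re.compile(r"\b(shall|must|required)\b")
OPTIONAL = re.compile(r"\b(should|preferred|desired)\b")


@dataclass
class Requirement:
    req_id: str
    text: str
    category: str
    mandatory: bool


def extract_requirements(text: str) -> list[Requirement]:
    """Turn each obligation sentence into one structured requirement."""
    found = []
    for sentence in re.split(r"(?<=[.;])\s+", text):
        lowered = sentence.lower()
        if MANDATORY.search(lowered):
            mandatory = True
        elif OPTIONAL.search(lowered):
            mandatory = False
        else:
            continue  # descriptive text, not a requirement
        category = next(
            (name for name, cues in CATEGORY_CUES.items()
             if any(cue in lowered for cue in cues)),
            "technical",
        )
        found.append(
            Requirement(f"R{len(found) + 1}", sentence.strip(), category, mandatory)
        )
    return found
```

Once requirements exist as records with stable IDs, every later stage (outline, staffing, validation) can refer to them instead of re-reading the source document.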

4. Outline

Once requirements are structured, the system maps them to a proposed response structure. Mandatory sections come first. Scored sections get weighted correctly. The document stops being a pile of pages and becomes a workable plan.
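A simple way to picture that ordering, assuming each section carries a mandatory flag and an evaluation weight (both hypothetical fields for this sketch):

```python
from dataclasses import dataclass


@dataclass
class Section:
    title: str
    mandatory: bool      # pass/fail sections the buyer requires
    weight: float = 0.0  # share of evaluation points; 0 if unscored


def build_outline(sections: list[Section]) -> list[Section]:
    """Mandatory sections first, then scored sections by descending weight."""
    return sorted(sections, key=lambda s: (not s.mandatory, -s.weight, s.title))


def effort_share(sections: list[Section]) -> dict[str, float]:
    """Split drafting effort across scored sections in proportion to weight."""
    total = sum(s.weight for s in sections)
    if not total:
        return {}
    return {s.title: s.weight / total for s in sections if s.weight > 0}
```

The same weights that order the outline can also guide where reviewers spend their time, which is why it helps to capture them as data during extraction.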

5. Draft

Drafting pulls from a knowledge base built from prior proposals, case studies, capability statements, resumes, and policy documents. The point is not to produce generic "AI copy." The point is to retrieve the firm's real experience and shape it into a response that actually addresses the requirement.

6. Assemble

This is where staffing recommendations, compliance tables, and supporting details come together. If a role requires a specific credential or years of experience, the system should not guess. It should match from actual resume data and flag any gaps.
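The "match, do not guess" rule can be sketched as a strict check against structured resume data. The `Resume` and `RoleRequirement` shapes below are assumptions for illustration; the important behavior is that anyone who falls short is returned with an explicit reason instead of being silently included or dropped.

```python
from dataclasses import dataclass, field


@dataclass
class Resume:
    name: str
    years_experience: int
    credentials: set[str] = field(default_factory=set)


@dataclass
class RoleRequirement:
    title: str
    min_years: int
    required_credentials: set[str] = field(default_factory=set)


def match_role(role: RoleRequirement, resumes: list[Resume]):
    """Return (qualified names, gaps per candidate) based only on resume data."""
    qualified: list[str] = []
    gaps: dict[str, list[str]] = {}
    for resume in resumes:
        issues = []
        if resume.years_experience < role.min_years:
            issues.append(
                f"needs {role.min_years} years, has {resume.years_experience}"
            )
        missing = role.required_credentials - resume.credentials
        if missing:
            issues.append("missing: " + ", ".join(sorted(missing)))
        if issues:
            gaps[resume.name] = issues  # surfaced for the human reviewer
        else:
            qualified.append(resume.name)
    return qualified, gaps
```

Availability is deliberately absent here: whether a qualified person can actually be assigned remains a human call.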

7. Format

Proposal teams know this pain well. Page limits, section labels, headers, required forms, and buyer-specific structure are part of the work whether anyone likes it or not. Formatting has to be treated as a first-class step, not something you fix in the last hour.
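Treating formatting as a step means its rules can be checked like any other requirement. A minimal sketch, assuming page counts per section are already known from the rendered document (the function and its inputs are hypothetical):

```python
def check_format(
    section_pages: dict[str, int],
    page_limits: dict[str, int],
    required_sections: list[str],
) -> list[str]:
    """Report missing required sections and sections over their page limit."""
    issues = []
    for label in required_sections:
        if label not in section_pages:
            issues.append(f"missing section: {label}")
    for label, pages in section_pages.items():
        limit = page_limits.get(label)
        if limit is not None and pages > limit:
            issues.append(f"{label}: {pages} pages exceeds limit of {limit}")
    return issues
```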

8. Validate

Before a human reviewer signs off, the system runs a final pass for omissions, qualification mismatches, formatting misses, and incomplete sections. The point is not perfection. The point is to catch the preventable mistakes early.
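The omission check is essentially a compliance matrix: for every requirement, which draft sections claim to address it. A minimal sketch, assuming each draft section records the requirement IDs it responds to (an assumption about the data model, not a description of Vercor's internals):

```python
def compliance_matrix(
    requirement_ids: list[str],
    draft_sections: dict[str, list[str]],
) -> dict[str, list[str]]:
    """Map each requirement ID to the sections that cite it."""
    matrix: dict[str, list[str]] = {rid: [] for rid in requirement_ids}
    for title, cited_ids in draft_sections.items():
        for rid in cited_ids:
            if rid in matrix:
                matrix[rid].append(title)
    return matrix


def find_gaps(matrix: dict[str, list[str]]) -> list[str]:
    """Requirements that no section addresses."""
    return [rid for rid, sections in matrix.items() if not sections]
```

For example, if the draft cites `4.2.3(a)` in the technical approach but nothing cites `4.2.3(b)`, `find_gaps` returns `["4.2.3(b)"]` and the reviewer sees the omission before submission rather than after.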

Why the knowledge base matters more than the model

The model is not the differentiator if the source material is weak.

RFP responses rise or fall on the specificity of the evidence behind them. Buyers can tell the difference between:

- real past performance

- a generic capability paragraph

- a polished sentence that does not actually prove anything

That is why the knowledge base matters so much. Past proposals, project descriptions, certifications, staff resumes, and internal methodologies need to be broken into retrievable units so the drafting layer can pull the right evidence for the right section.

If the system is drawing from vague source material, the output will sound vague too. There is no prompt clever enough to fix that.

Why infrastructure choices matter in this category

We built Vercor on Cloudflare because proposal workflows are document-heavy, deadline-driven, and naturally spiky across customers.

One customer may be running a few bids a month. Another may have multiple active proposals moving at once across teams, reviewers, and submission deadlines. The platform needed to handle that variability without turning every jump in volume into an infrastructure project.

Cloudflare gave us a strong foundation for that. It lets us handle bursty demand across many customers, keep the workflow responsive as usage grows, and avoid a lot of the operational drag that usually shows up when document-processing products start scaling.

That decision was not just about engineering efficiency. In regulated and procurement-heavy environments, infrastructure questions quickly become buyer questions. Teams want to know how documents are handled, how customer access is controlled, how data is separated, and whether the system can be reviewed later if something needs to be traced.
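Those buyer questions map to a few concrete properties: every document is keyed by customer, every read is scoped to the caller's customer, and every access attempt is recorded. The in-memory sketch below illustrates the idea only. It does not use any Cloudflare API, and the class and field names are hypothetical.

```python
from datetime import datetime, timezone


class DocumentStore:
    """Tenant-scoped storage with an append-only audit trail (in memory)."""

    def __init__(self) -> None:
        self._docs: dict[tuple[str, str], str] = {}
        self.audit_log: list[dict] = []

    def _record(self, tenant: str, user: str, action: str,
                doc_id: str, allowed: bool) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "tenant": tenant, "user": user,
            "action": action, "doc_id": doc_id, "allowed": allowed,
        })

    def put(self, tenant: str, user: str, doc_id: str, content: str) -> None:
        # The tenant is part of the key, so two customers can never collide.
        self._docs[(tenant, doc_id)] = content
        self._record(tenant, user, "put", doc_id, True)

    def get(self, tenant: str, user: str, doc_id: str) -> str:
        found = (tenant, doc_id) in self._docs
        self._record(tenant, user, "get", doc_id, found)  # log failed reads too
        if not found:
            raise KeyError(f"{doc_id} not found for tenant {tenant}")
        return self._docs[(tenant, doc_id)]
```

A read from the wrong tenant fails the same way as a read of a missing document, and both leave an audit entry that can be traced later.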

When the workflow touches sensitive proposal data, infrastructure is not just a backend detail. It is part of what makes the product credible.

What still stays human

This kind of system should not pretend to remove human review.

The human team still needs to:

- review technical sections for accuracy

- confirm staffing choices against real availability

- make final judgment calls on win strategy

- check the last-mile polish before submission

What the system should remove is the drudge work: hunting through old files, cross-referencing requirements by hand, assembling tables, and spending midnight hours checking whether section 4.2.3(b) was addressed somewhere in the draft.

That is where the time goes. That is also where the least strategic effort gets spent.

When RFP automation makes sense

Not every team needs a full proposal automation pipeline.

It tends to make the most sense when:

- you respond to bids regularly, not occasionally

- assembly time is a real bottleneck

- you already have meaningful source material to seed a knowledge base

- your proposals follow recurring patterns

- your experts are spending too much time on formatting and retrieval work

If the team submits one proposal every few months, manual work may still be fine. If the team submits several per month and each one drains days of senior time, the economics change quickly.

The bottom line

The core problem in proposal work is not writing. It is assembly under pressure.

That is why AI can help here without becoming a gimmick. When the system is designed around extraction, retrieval, staffing logic, formatting, and validation, it reduces the amount of expert time spent on the least valuable part of the process.

That is the broader pattern we care about at Paramint: AI systems that reduce operational drag in real workflows, not just content generation for its own sake.

If your team is wrestling with proposal assembly or another document-heavy workflow, our AI & Intelligent Automation practice is built for exactly that kind of production problem. You can also see Vercor for the product side of this work.

Need help building something like this?

At Paramint, we build production AI systems, custom software, and internal tools for growth-stage startups, enterprises, and government agencies. We focus on solutions that deliver measurable impact, not just demos.
